Apache HTTP Server was originally developed by Robert McCool, and since its early days it has become one of the most popular and most widely used web servers (it is an HTTP server, not a client). Apache was written in C and XML, and it is compatible with Microsoft Windows, Unix-like systems, and OpenVMS. Apache HTTP Server also supports the TLS and SSL protocols. In addition, Apache provides interfaces for programming languages like Python, PHP, Tcl, Perl, etc.

Still, you might not want to use Apache and might want to uninstall it. In that case, we have put together a list of multiple methods. Here we will describe all the various methods, sharing them one by one. So, let's proceed.


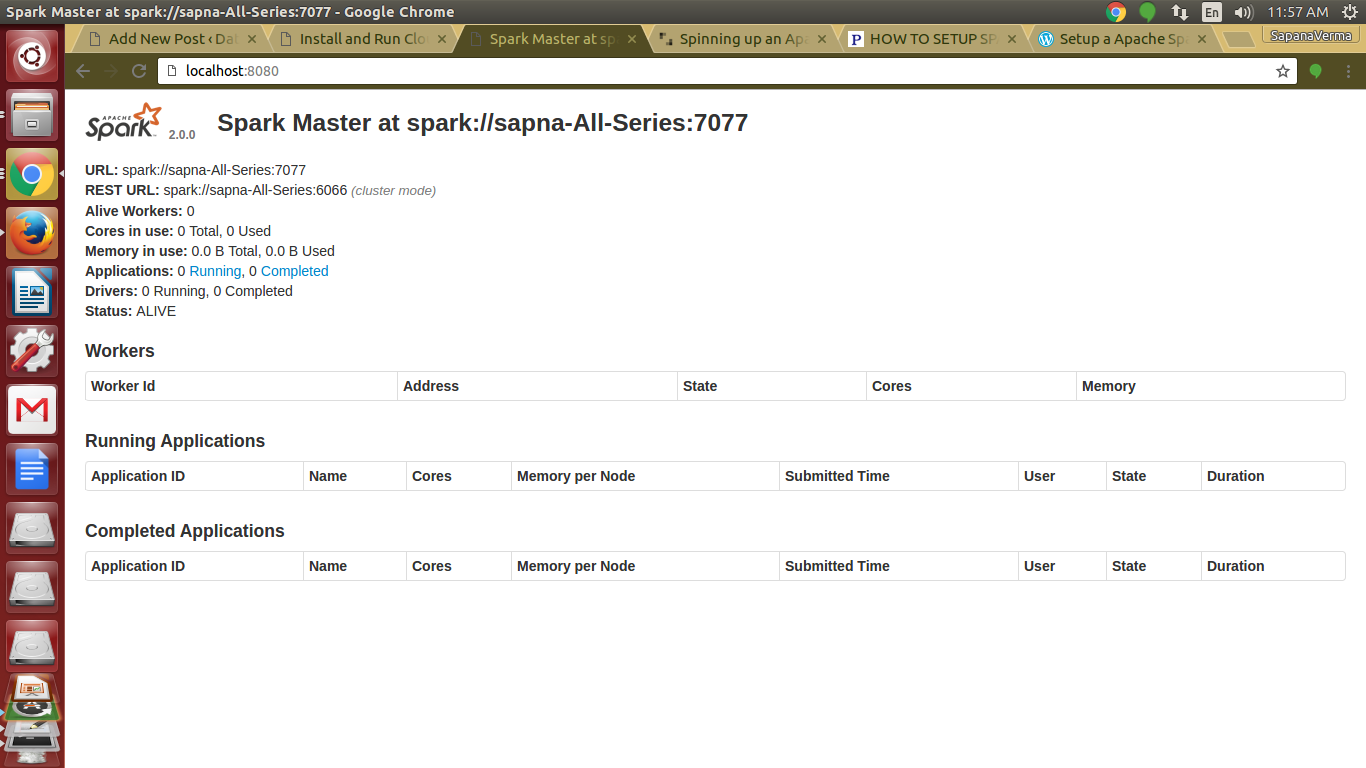

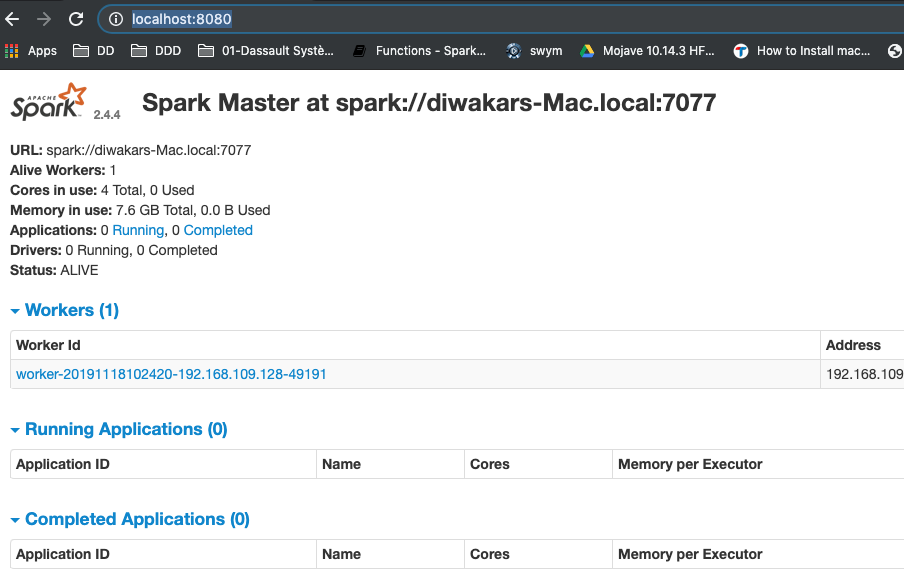

If you don't know how to uninstall Apache, then you have come to the right place, because in this post we will share multiple ways of uninstalling the Apache service. Apache HTTP Server is one of the world's most popular web servers, and the best part about it is that it is free and open source.

Let's start the Spark master first by running /sbin/start-master.sh. If you can access the master web UI, then your master is up and running. Now we need to start the slaves: /sbin/start-slave.sh spark://127.0.0.1:7077. Under the Workers section in the master UI you should see two worker instances with their worker IDs. This type of cluster setup is called a standalone cluster.

On Windows, the scripts above will not be able to start the cluster. To get your cluster up and running on Windows, use the following instead. In one command prompt window, run /bin/spark-class.cmd .master.Master. In a second command prompt, run /bin/spark-class.cmd .worker.Worker followed by the same master URL that is passed to start-slave.sh above.
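The start-up sequence for the master and slaves on Mac can be sketched as a small script. This is a minimal sketch rather than the author's own script: it assumes SPARK_HOME points at your Spark install, and the names MASTER_HOST, MASTER_PORT, master_url and start_cluster are hypothetical helpers introduced only for illustration.

```shell
#!/usr/bin/env bash
# Sketch: start a local standalone master and its workers.
# MASTER_HOST / MASTER_PORT are illustrative variables; the defaults
# match the spark://127.0.0.1:7077 URL used in the text.
MASTER_HOST="${MASTER_HOST:-127.0.0.1}"
MASTER_PORT="${MASTER_PORT:-7077}"

# Build the master URL that the workers connect to.
master_url() {
  printf 'spark://%s:%s\n' "$MASTER_HOST" "$MASTER_PORT"
}

# Start the master first, then the workers, as described in the text.
# Returns non-zero if the scripts cannot be found under SPARK_HOME.
start_cluster() {
  if [ ! -x "${SPARK_HOME:-}/sbin/start-master.sh" ]; then
    echo "start-master.sh not found under '${SPARK_HOME:-}/sbin'" >&2
    return 1
  fi
  "$SPARK_HOME/sbin/start-master.sh" &&
    "$SPARK_HOME/sbin/start-slave.sh" "$(master_url)"
}
```

Calling start_cluster after sourcing this file runs the two scripts in order; it deliberately does nothing at load time, so it is safe to source from a shell profile.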

Open the /conf/slaves file in a text editor and add "localhost" on a new line.

Add the following to your /conf/spark-env.sh file:

export SPARK_WORKER_DIR=/PathToSparkDataDir/

Note: Both the slaves and spark-env files are already present in the conf directory as templates; you will have to rename them, dropping the .template suffix, to slaves and spark-env.sh respectively.

Setting SPARK_WORKER_INSTANCES in this file gives us two worker instances on the localhost machine. Executor and worker memory configurations are also defined here. We will see more later on what Worker, Executor, etc. are.
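The configuration steps can be sketched as a helper that prepares both files in one go. This is a sketch under stated assumptions: setup_conf is a hypothetical function name, it copies the templates instead of renaming them (so the originals stay available), and the memory values are placeholders, since the text only says that memory settings live in this file. SPARK_WORKER_INSTANCES=2 follows the text's two-worker setup.

```shell
#!/usr/bin/env bash
# Sketch: prepare conf/slaves and conf/spark-env.sh for a local cluster.
# Usage: setup_conf <conf_dir> <worker_data_dir>
setup_conf() {
  conf_dir="$1"
  data_dir="$2"

  # Create each file from its .template if it does not exist yet
  # (a copy rather than the rename described in the text).
  for name in slaves spark-env.sh; do
    if [ ! -f "$conf_dir/$name" ] && [ -f "$conf_dir/$name.template" ]; then
      cp "$conf_dir/$name.template" "$conf_dir/$name"
    fi
  done

  # Add "localhost" on its own line unless it is already there.
  grep -qx 'localhost' "$conf_dir/slaves" 2>/dev/null ||
    echo 'localhost' >> "$conf_dir/slaves"

  # Append the worker settings. The memory sizes are assumed values.
  cat >> "$conf_dir/spark-env.sh" <<EOF
export SPARK_WORKER_DIR=$data_dir
export SPARK_WORKER_INSTANCES=2
export SPARK_WORKER_MEMORY=1g
export SPARK_EXECUTOR_MEMORY=512m
EOF
}
```

Because the function appends to spark-env.sh, running it twice duplicates the export lines; for a one-off local setup that is harmless, but you may prefer to edit the file by hand afterwards.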


You are fine if you have Maven installed in your Eclipse alone. If you wish to build from your pom.xml on the command line, then you need Maven on your OS as well. Again, there are plenty of good blogs covering this topic; please refer to one of them.

We will need the Spark cluster setup, as we will be submitting our Java Spark jobs to the cluster. We will set up a cluster which has 2 slave nodes.

If you have brew configured, then all you need to do is run:

brew install apache-spark

Your Spark binaries/package get installed in the /usr/local/Cellar/apache-spark folder. Set up SPARK_HOME now:

vi ~/.bashrc
export SPARK_HOME=/usr/local/Cellar/apache-spark/$version/libexec
export PATH=$PATH:$SPARK_HOME/bin:$SPARK_HOME/sbin

Once you have installed the binaries, either using the manual download method or via brew, proceed to the cluster setup steps, which will help us set up a local Spark cluster with 2 workers and 1 master.
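The SPARK_HOME setup can also be sketched without hard-coding the version directory. This is an alternative to the exports in the text, not the author's method: it asks Homebrew for the formula prefix with brew --prefix and assumes the libexec layout described above. The function names detect_spark_home and add_to_path are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: locate SPARK_HOME and extend PATH without repeating entries.

# Print a Spark home directory: an existing SPARK_HOME wins, otherwise
# fall back to <brew prefix>/libexec when brew and the directory exist.
detect_spark_home() {
  if [ -n "${SPARK_HOME:-}" ]; then
    echo "$SPARK_HOME"
    return 0
  fi
  if command -v brew >/dev/null 2>&1; then
    prefix="$(brew --prefix apache-spark 2>/dev/null)" || return 1
    if [ -d "$prefix/libexec" ]; then
      echo "$prefix/libexec"
      return 0
    fi
  fi
  return 1
}

# Append a directory to PATH only if it is not already listed.
add_to_path() {
  case ":$PATH:" in
    *":$1:"*) ;;
    *) PATH="$PATH:$1" ;;
  esac
}

# Example use in ~/.bashrc:
#   SPARK_HOME="$(detect_spark_home)" && export SPARK_HOME &&
#     add_to_path "$SPARK_HOME/bin" && add_to_path "$SPARK_HOME/sbin"
```

The idempotent add_to_path avoids the PATH growing each time ~/.bashrc is re-sourced, which the plain export line in the text would cause.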


For the manual download method on Mac, either double-click the package or run the tar -xvzf /path/to/yourfile.tgz command, which will extract the Spark package. Then run spark-shell, and you should be in the Scala command prompt as shown in the picture.

For Windows, you will need to extract the tgz Spark package using 7zip, which can be downloaded freely. Then run spark-shell.cmd; if everything goes fine, you have installed Spark successfully.

A Scala install is not needed for the Spark shell to run, as the binaries are included in the prebuilt Spark package. There are plenty of Java install blogs; please refer to one of them for installing and configuring Java on either Mac or Windows.

As we will be focusing on the Java API of Spark, I'd recommend installing the latest Eclipse IDE and Maven packages too.
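The manual extraction step can be sketched as two small helpers: one that unpacks the archive and one that checks a spark-shell launcher is present afterwards. This is a sketch under assumptions: extract_spark and find_spark_shell are hypothetical names, and the check only looks for a bin/spark-shell file; it does not start Spark.

```shell
#!/usr/bin/env bash
# Sketch: unpack a Spark .tgz and confirm the launcher is present.

# Usage: extract_spark <archive.tgz> <destination_dir>
extract_spark() {
  archive="$1"
  dest="$2"
  mkdir -p "$dest" && tar -xzf "$archive" -C "$dest"
}

# Print the path of the first bin/spark-shell found under a directory.
find_spark_shell() {
  find "$1" -type f -path '*/bin/spark-shell' | head -n 1
}
```

For example, after extract_spark /path/to/yourfile.tgz "$HOME/spark", an empty result from find_spark_shell "$HOME/spark" suggests the archive was not a prebuilt Spark package.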